Fungible Factories

From Interchangeable Parts to Interchangeable Specs

This is the written version of a talk I gave at the OSU HAMMER Engineering Research Center in February 2026.

Every engineering field has its utopias: biotechnology has the immortality drug and mind uploading, computer science has the godlike AI and perfect external memory, aerospace has the flying car, warp drive, and O’Neill cylinder. The alchemists had their philosopher’s stone.

In manufacturing, we have ours too — the Star Trek replicator and the von Neumann machine. Machines that can make anything, anywhere, even more of themselves. Machines that frictionlessly turn imagination into reality.

Like any utopia, these are asymptotes, destinations so far off that they may as well be impossible to reach in a finite time. But striving for them is nevertheless valuable. Striving for the philosopher’s stone gave us modern chemistry. We haven’t won the war on cancer, but many people are alive who wouldn’t otherwise be. And while the AI labs have not (yet) created a god, they have created some pretty incredible tools.

I want to lay out a potential manufacturing paradigm that would bring us one step toward the utopian manufacturing dream. I also want to argue why building this new paradigm is both timely and important for the US in 2026.

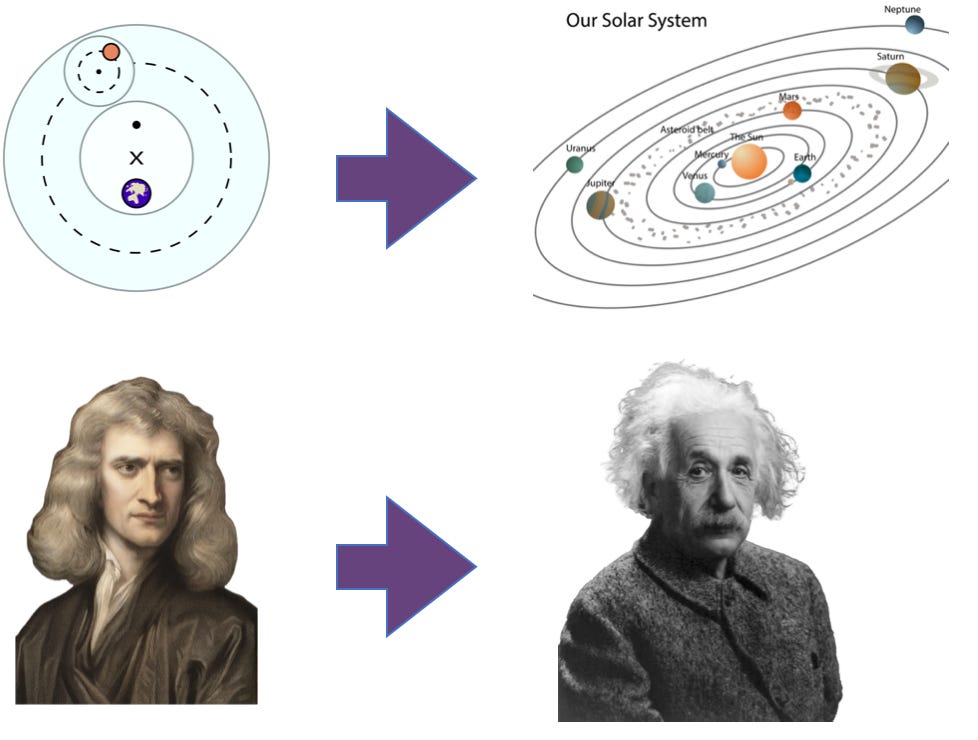

Note: I’m using the term “paradigm” in the sense of Thomas Kuhn’s philosophy of science: self-consistent ways of doing things or thinking about the world based on a set of assumptions that occasionally change in a “paradigm shift.” The classic example of shifting paradigms in science is the transition from the geocentric model of the universe, which assumed that all celestial bodies revolved around the Earth (potentially attached to crystal spheres), to a heliocentric model that assumes objects in our solar system revolve around the sun driven by gravity in a universe with no fixed “center.” The shift from Newtonian to Einsteinian physics is another.

We can extend this idea of paradigms to technology. The cleanest example of a manufacturing paradigm is interchangeable parts, built on the assumption that you make things as assemblies of parts that are identical to within some tolerance. That was not always true! For most of history, each part was hand-shaped to fit with all the other parts of a specific device. As late as the 1860s, interchangeable parts weren’t the default; they were expensive and only worth it if production volumes were high enough.

We currently live in a manufacturing paradigm unlocked by a world where sending information and goods is cheap thanks to the internet and containerization; statistics, liquid markets, and global trade have made just-in-time production possible. We could call it the “efficient networks” paradigm. This paradigm is full of assumptions about what’s possible and how things work. We don’t think about them much — living in a paradigm is like being a fish in water. Some of the assumptions include:

The process of prototyping a system is very different from the process of manufacturing it at scale.

A new product requires a new production line.

Each factory and production line is custom-built and unique, requiring untold engineering hours even if it’s highly automated.

High volume, low mix is the way to make things cost-effectively. High volume, high mix is expensive.

Physical products have models that are updated once a year or more.

We can invalidate these assumptions.

If you can scale cost-effectively through parallelization, you can produce a system in the same way you prototyped it. If you can make things entirely with software-defined tools, you don’t need to waste time and treasure building a new production line or factory for each product. These properties then drastically reduce the switching costs between different outputs, making costs agnostic to how many different things you’re making. All of this then makes creating physical products far more like software, where you can “push” updates to the next unit on the line as soon as it’s ready, drastically increasing the rate of improvement and learning.

I realize this is all incredibly abstract! It is hard to describe something that does not yet exist. So let me describe how making something within this paradigm might work.

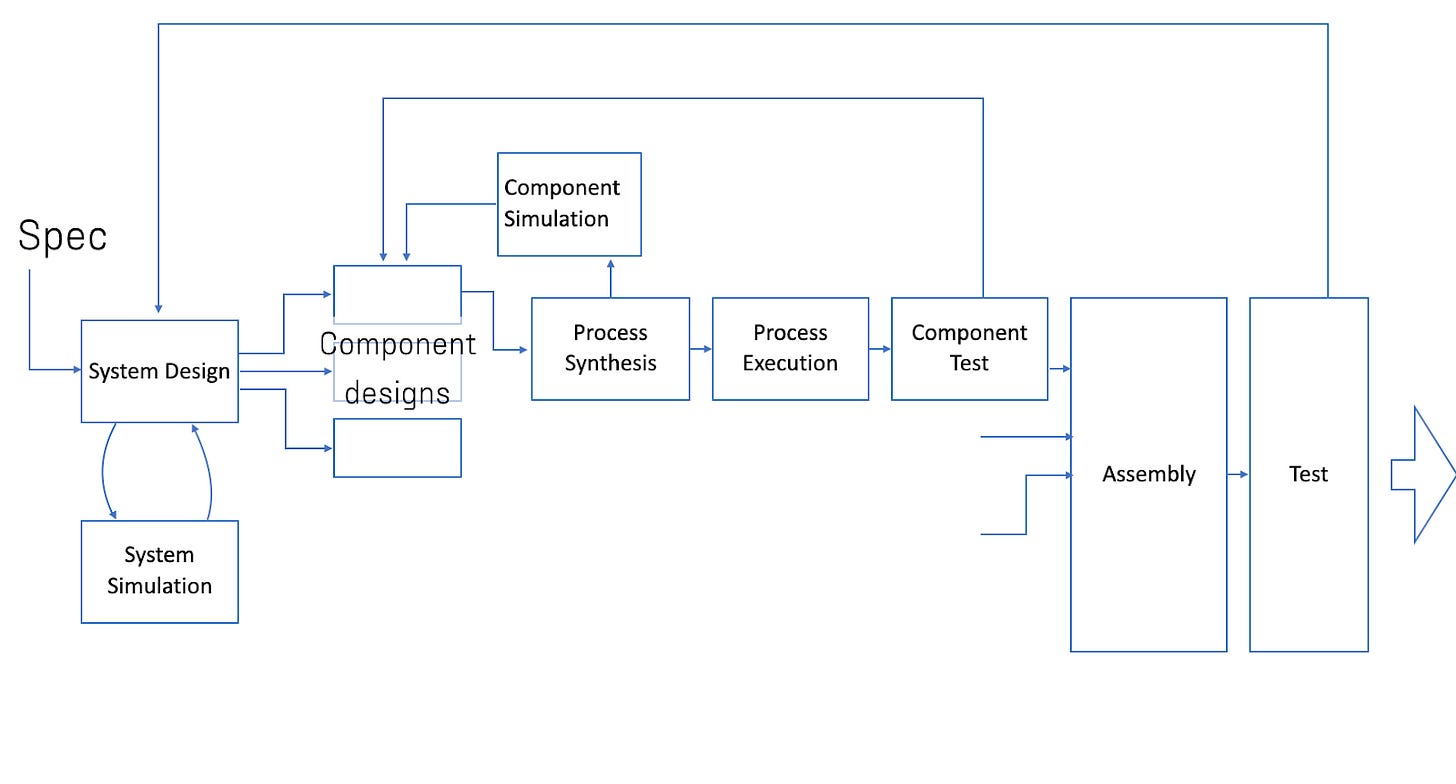

An engineer in the not-so-distant future will start by specifying a system they want to build, like a drone or a robot. This will include not just CAD files of its structural components, but all the specs: force and torque, thermal characteristics, power consumption, all of it. (If we’re being even more ambitious, the specs could be much closer to the actual things you would want a robot to do, similar to “unit testing” in software.) Software (let’s call it an AI agent, both for the anthropomorphism and as a convenient handle) takes a given design or creates one on its own, then simulates it to see if it can indeed hit specs and pass tests. If not, the agent feeds the failures back and updates the design until it does.
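To make the "specs as unit tests" loop concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the part is a simple aluminum cantilever, the "simulation" is a closed-form beam formula, and the "agent" is a crude rule that thickens or narrows the beam. The names `BeamDesign`, `simulate`, `SPECS`, and `design_loop` are hypothetical; a real system would sit on top of proper simulation tools.

```python
from dataclasses import dataclass, replace

# Illustrative material constants (approximate values for aluminum).
E_ALUMINUM_PA = 69e9
DENSITY_KG_M3 = 2700.0


@dataclass(frozen=True)
class BeamDesign:
    length_m: float
    width_m: float
    thickness_m: float


def simulate(design: BeamDesign, load_n: float) -> dict:
    """Toy 'simulation': tip deflection of an end-loaded cantilever beam."""
    inertia = design.width_m * design.thickness_m ** 3 / 12
    deflection = load_n * design.length_m ** 3 / (3 * E_ALUMINUM_PA * inertia)
    mass = DENSITY_KG_M3 * design.length_m * design.width_m * design.thickness_m
    return {"deflection_m": deflection, "mass_kg": mass}


# Specs written like unit tests: each one is a named check on simulated results.
# The thresholds are made-up example values.
SPECS = {
    "stiff_enough": lambda r: r["deflection_m"] <= 0.002,
    "light_enough": lambda r: r["mass_kg"] <= 0.5,
}


def failed_specs(results: dict) -> list:
    return [name for name, check in SPECS.items() if not check(results)]


def design_loop(design: BeamDesign, load_n: float, max_iters: int = 50) -> BeamDesign:
    """Simulate, read the failures, nudge the design, repeat."""
    for _ in range(max_iters):
        failures = failed_specs(simulate(design, load_n))
        if not failures:
            return design
        if "stiff_enough" in failures:
            design = replace(design, thickness_m=design.thickness_m * 1.1)
        else:  # only the mass spec failed
            design = replace(design, width_m=design.width_m * 0.9)
    raise RuntimeError("no design met the specs within the iteration budget")


start = BeamDesign(length_m=0.3, width_m=0.03, thickness_m=0.003)
final = design_loop(start, load_n=50.0)
print(final, simulate(final, 50.0))
```

The point of the sketch is the shape of the loop, not the update rule: the failures themselves are the signal that drives the next design revision.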

The agent then breaks the target system down into components and runs a similar simulation-design feedback loop for each of them. With the system broken down into notionally-correct components, the agent then simulates how each of them will actually be made — from the tool to the exact movements it needs to go through. The agent will then need to loop between this step and the component’s design (potentially even back to the system design) because as any good engineer knows, there is often a tight coupling between a component’s design, its properties, and how it’s made. The specific path by which you lay down carbon fiber has a huge effect on its strength; the orientation of layers in a 3D-printed part determines where it is strong or weak to shear forces. After all of these loops (and loops within loops) we still have not yet moved a single atom! But abundant, powerful compute is one of the biggest answers to “why now, what’s different?”

With a manufacturing plan in place, several shipping-crate-sized boxes slide together. They’re filled with software-defined machines (or at least the business ends of them): 3D printers, CNCs, and more that have not yet been invented. Actuators — some that look like robot arms, some more exotic — pull in raw materials and in a whirl of activity, the “cell” transforms them into a finished component. More tools swing into place that test the component against the specs the agent specified. If a component is unacceptable, data feeds back to agents who update the synthesis plan and even the part’s design to address the failed test’s root cause. After the synthesis loop completes each component, the actuators assemble the components into subsystems and then the full system — autonomously testing and updating until you have a verified functional robot and a verified production process.
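The make, measure, and update cycle in that cell can be sketched in a few lines. This is a toy under loud assumptions: `make_part` stands in for the physical process with a made-up error model the planner cannot see, and the "synthesis plan" is a single commanded dimension. All names and numbers here are hypothetical.

```python
def make_part(commanded_mm: float) -> float:
    """Stand-in for the physical cell. Assumed (hidden) process error:
    parts come out slightly undersized relative to the command."""
    return 0.99 * commanded_mm - 0.05


def produce_until_in_spec(target_mm: float, tolerance_mm: float = 0.01,
                          max_parts: int = 10):
    """Make a part, test it against the spec, feed the error back into the plan."""
    commanded = target_mm
    log = []  # (commanded, measured) for every part made
    for _ in range(max_parts):
        measured = make_part(commanded)
        log.append((commanded, measured))
        error = target_mm - measured
        if abs(error) <= tolerance_mm:
            return commanded, log  # verified part and verified process setting
        commanded += error  # update the plan using the measured miss
    raise RuntimeError("process did not converge within the part budget")


commanded, log = produce_until_in_spec(target_mm=20.0)
for cmd, meas in log:
    print(f"commanded {cmd:.4f} mm, measured {meas:.4f} mm")
```

In this toy the first part misses the tolerance and the second passes. The output of the loop is not just a good part but a process setting that is known to produce one.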

This way of doing things opens many possibilities. When you want to increase production, you just activate more cells. Because the entire process is encoded in software and the hardware is identical, activating new cells is like installing a new program on a computer. The price of this hardware drops because you can scale its production. This transferability means that you can easily spin up production close to where you need the product, whether that’s at the ends of the earth or in space. Automated testing loops drastically lower the overhead of customizing and prototyping. The same feedback loops can go one step further and incorporate data from operation in the field directly back into the design; many problems only pop up when a robot encounters the real world, and no amount of testing can surface them. Manufacturing infrastructure can rapidly switch from making factory robots to masks to military drones, if need be. These properties in turn will have many incredible second-order effects: drastically lowering the overhead of developing physical products, manufacturing in space, robots of all shapes and sizes, and the list goes on.

There are two skeptical reactions to the picture I’ve tried to paint that I would like to briefly address: “this is an impossible utopian pipedream” and “this is basically already happening.” Both are wrong but both have a nugget of truth.

This new paradigm won’t happen tomorrow. We are not going to get there in one giant leap — we’ll make some things this way long before others. We’re in a world of mainframes and vacuum tubes talking about graphical user interfaces and laptops. It took more than a hundred years between the invention of interchangeable parts and their widespread use. It’s not even inevitable: work to create new paradigms requires navigating potentially-impossible open research problems, many market failures, and vested interests who don’t see why the investment is worthwhile or actively oppose it.

But the pieces are already out there: AI for different parts of the engineering stack, digital twins, training robots in simulation, and an explosion of tooling-free machines. Seeing this, you might even be tempted to extrapolate and believe that this new paradigm is basically inevitable. That’s also wrong! We have precursor pieces, yes, but currently they’re being used as point changes in the existing paradigm: the simulation-trained robots are installed in big fixed production lines, AI for CAD generates 2D drawings for contract manufacturers. Building the complete system that leverages them to their full potential is still a huge hurdle that will require entirely new frameworks and reinventing existing tools.

So where do we start? The domains that will benefit the most from this approach are ones in which the design and production of a part or assembly are deeply coupled. That is, if you want the thing to work, you need to think about how you’re going to make it while you’re designing it. Everything has this property to some extent: placing two holes half a millimeter apart is easy in CAD, but unless you’re incredibly careful, real physical metal will tear when you try to drill those holes. This is why design for manufacturing is a thing. This design-manufacturing coupling is especially tight in something like carbon-fiber parts or power electronics. Two carbon-fiber objects with the exact same shape can have drastically different properties depending on how the fibers were laid down and bonded. Those process-dependent properties are especially hard to simulate, so today carbon fiber parts depend heavily on tacit knowledge and iteration, which in turn drives up price, development time, and ultimately keeps people from using it.
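The hole-spacing example is the simplest kind of design-for-manufacturing rule, and it can be written as a check that runs against the design before any metal is cut. The sketch below is illustrative only: `hole_spacing_violations` is a hypothetical helper, and the minimum web (the material left between two hole edges) is a placeholder parameter, since the right value depends on the material, thickness, and process.

```python
from itertools import combinations
from math import hypot


def hole_spacing_violations(holes, min_web_mm):
    """holes: list of (x_mm, y_mm, diameter_mm).
    Returns (i, j, web_mm) for each pair of holes whose edge-to-edge
    gap is smaller than min_web_mm."""
    violations = []
    for (i, a), (j, b) in combinations(enumerate(holes), 2):
        center_distance = hypot(a[0] - b[0], a[1] - b[1])
        web = center_distance - (a[2] + b[2]) / 2
        if web < min_web_mm:
            violations.append((i, j, web))
    return violations


# Two 3 mm holes whose edges are half a millimeter apart, plus one far away.
holes = [(0.0, 0.0, 3.0), (3.5, 0.0, 3.0), (20.0, 0.0, 3.0)]
violations = hole_spacing_violations(holes, min_web_mm=2.0)  # threshold is an assumed value
print(violations)
```

Rules like this are easy to encode. The carbon-fiber case is hard precisely because the equivalent rules are not known in closed form and have to be learned from making and testing parts.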

From there we need to simultaneously develop general purpose connective tissue — the software architectures, agents, and robotic systems — and one-by-one add “skills” and tools like software-defined forging machines.

Let me close by explaining why this matters. At the highest level, creating new manufacturing paradigms matters because materials and manufacturing underpin civilization. Ultimately, we are limited by the stuff we can make things out of and our ability to turn that stuff into useful things. New manufacturing paradigms unlock new products and let us make existing ones cheaper, better, and faster.

This matters particularly now in early 2026, not just for abstract reasons, but because of two concrete trends reshaping our world: American reindustrialization and space.

Both the supply chain shocks of the COVID-19 pandemic and political tensions with the world’s largest manufacturer have made many people want to manufacture more things in the US. If you look at history, new paradigms drive shifts of manufacturing centers of gravity. Steam power drove a shift from mainland Europe to Britain; interchangeable parts (which were colloquially known as “the American System”) drove a shift from Britain to the US; just-in-time manufacturing drove a shift from the US to Japan; and network manufacturing drove a shift to China. In each situation, a new entrant who was previously looked down on as a low-quality manufacturing “backwater” scooped the dominant incumbent using a new approach. In economics (specifically disruption theory), new entrants don’t just displace incumbents by trying really hard (although that’s necessary too) — they take a new approach to the problem that leapfrogs the old one. Building minimills for processing steel, going straight to mobile, etc. If we’re going to manufacture things cost-effectively in the US, we’ll need to do the same thing. We’re not going to out-China China. Instead, we need to leapfrog them with a new paradigm that we can become uniquely good at.

Today, space is also on the mind. If Artemis ever gets off the ground, it will send astronauts around the moon for the first time in more than half a century; there’s talk of nuclear power and a permanent base on the moon; and let’s not start with space datacenters. And frankly, it’s close to my own heart. I’m a card-carrying space nerd: my PhD work focused on space robotics, I worked at NASA during the summers, and my advisor was NASA’s chief technologist. If humanity is going to become a space-faring species, we will eventually need to make things in space, both to take advantage of the unique conditions in space and because it would be insane to launch every kilogram of mass we use there up from Earth. This new paradigm is uniquely well-suited to space manufacturing: launch once and manufacture anything with designs you can test on identical hardware and then “upload” from Earth.

It’s hard to give this thing a name. We can talk about what it is: software-defined, closed loop, and modular. We can talk about what it isn’t: tooling-dependent, manual, or tied to specific locations or production lines. I’m torn between two potential names for this thing because there are two core pieces that I think will really unlock this paradigm:

1. The ability to “backpropagate” differences between a spec and measured performance data back to a design and manufacturing process. This suggests Differentiable Manufacturing.

2. The ability (partially dependent on #1) to run the same process on different pieces of hardware without extensive calibration. This suggests Compiled Manufacturing.
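A toy version of both ideas together, under heavy assumptions: each machine is modeled as a single unknown scale factor (say, shrinkage), that factor is fitted by gradient descent on the gap between predicted and measured sizes, and the same target is then "compiled" into a different command for each machine. The names `fit_scale`, `compile_command`, `run_machine`, and `HIDDEN_SCALES` are hypothetical, and the gradient is written out by hand; a real system would differentiate through a far richer process model, probably with an automatic differentiation library.

```python
def fit_scale(commands_mm, measured_mm, steps=200, learning_rate=1e-3):
    """Fit measured ~= scale * command by gradient descent on mean squared error.
    The learning rate is tuned for commands of a few tens of millimeters."""
    scale = 1.0
    n = len(commands_mm)
    for _ in range(steps):
        # d/d(scale) of mean((scale * c - m)^2)
        grad = sum(2 * (scale * c - m) * c
                   for c, m in zip(commands_mm, measured_mm)) / n
        scale -= learning_rate * grad
    return scale


def compile_command(target_mm, scale):
    """Turn a hardware-independent target into a machine-specific command."""
    return target_mm / scale


# Stand-ins for two physical cells with different, unknown behavior (made-up values).
HIDDEN_SCALES = {"cell_a": 0.98, "cell_b": 1.03}


def run_machine(name, command_mm):
    return HIDDEN_SCALES[name] * command_mm


test_commands = [10.0, 20.0, 30.0]
results = {}
for name in HIDDEN_SCALES:
    measured = [run_machine(name, c) for c in test_commands]
    scale = fit_scale(test_commands, measured)
    results[name] = run_machine(name, compile_command(25.0, scale))

print(results)  # both cells should land very close to the 25 mm target
```

The "source" here is just the 25 mm target. What differs per machine is the compiled command, and the measured data is what makes that compilation possible without hand calibration.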

Every prior paradigm looked impractical until it wasn’t. Interchangeable parts were mocked as “the American System” because Europeans thought Americans too crude for real manufacturing. Toyota’s methods were “culturally specific” until they ate Detroit’s lunch. But we can make today’s assumptions as quaint as hand-fitting each musket. The pieces exist. The need is clear. What’s missing is the connective tissue that turns components into a system.

We’re actively working on this at Spectech! If you want to help support or get involved, please reach out.

Further Reading

Austin Vernon’s Speed Can Reindustrialize America

Brian Potter’s Is the Future “AWS for Everything”?

David Hounshell’s From the American System to Mass Production, 1800-1932: The Development of Manufacturing Technology in the United States

Dan Wang’s 2025 Letter

Ben Reinhardt’s The Art of Industrial Leapfrogging

Thanks to Austin Vernon and Brian Potter for reading drafts of this doc. Claude caught a few grammar mistakes; all em-dashes are Ben’s.

I feel somewhat conflicted about this--on the one hand, as a software nerd, the idea of bringing modularity into physical domains is super neat. On the other, it gives me a hint of 'solution in search of a problem'.

It would be useful to compare and contrast this to the evolution of the 3D printing space: there's a lot of initial promise, a tremendous speed up to product prototyping, some niche applications where it's well suited, but broadly failing to displace traditional high-volume manufacturing. Particularly, the 'high mix' use-case seems rarer in practice. Handy for prototyping, but once you've nailed it, you optimize the line and shave every penny off the high-volume part.

How would you see this approach emerging differently? I'd imagine that, if there is some cross-over point where sheer bulk parallelization overtakes traditional volume manufacturing, there's a large initial gulf. Cheaper than a prototyping firm, but still sufficiently more expensive than what an agile Chinese manufacturing center can turn around. What scales this across the gap?

If this did totally succeed, does that just move the value up the supply chain to the material processing? I don't see shipping-container-sized steel foundries or aluminum smelters being viable. Can this address American reindustrialization without tackling the supply chains? Right now, even if my factory were free, I think if I wanted to make, say, motors, it might actually be cheaper to buy a motor off Alibaba and break it into parts than to try to secure the magnets alone. Especially if I weren't capable of securing a single-part high-volume contract because my software-defined factory doesn't build anything specific in large quantities.

Anyway, I like the ideas, I've thought along similar lines in the past. Show me I'm wrong!

I think you have the wrong names on the pictures of the solar system. The pictures are of the geocentric and heliocentric models, associated with Ptolemy and Copernicus.